The Velvet HDR Underground. HDR, DRI, LDR, Black Card, Layer Masking compared.


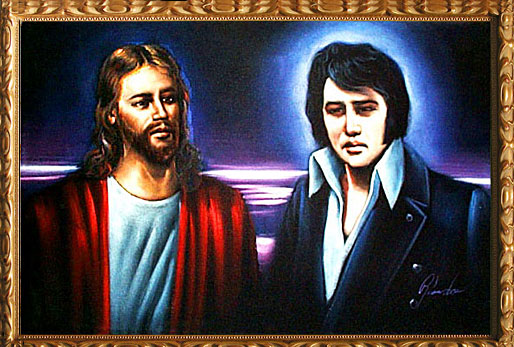

| Figure A: Kitschy yet iconic at the same time! |

Regardless, HDR was invented in the 1950s for a very specific and important reason: to image subjects that are difficult to visualize, such as the heart of an atomic explosion, while keeping the entire image of the explosion intact. It has other applications too, one of which is to simulate what the human eye sees rather than what a camera sensor or even film sees.

The human eye is quite a sophisticated imaging device. It can see well in the dark while also seeing what's in the light, simultaneously and almost instantly. The problem with most photographs is that the medium can't render the same range that the human eye can see. A common misconception is that the camera sensor or film cannot see more than what the final image shows. In fact, the recording medium can see quite a bit more than one might think, at least with modern sensors. (To dispel the notion that film has a far higher dynamic range than modern sensors: most modern sensors have a dynamic range of 10-12 stops, while film has a range of 8-12 stops.) The real problem is the display medium that we view the images on. I won't go into the differences between additive and reflective light/colour because there are plenty of articles online about colour theory.
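To put those stop counts in perspective: each stop is a doubling of light, so a range of N stops corresponds to a contrast ratio of roughly 2^N to 1. Here is a small sketch of that arithmetic in Python. The function names are my own, purely for illustration.

```python
import math

def stops_to_contrast_ratio(stops: float) -> float:
    """Each stop doubles the light, so N stops span a 2**N : 1 ratio."""
    return 2.0 ** stops

def contrast_ratio_to_stops(ratio: float) -> float:
    """Inverse of the above: how many doublings fit into a contrast ratio."""
    return math.log2(ratio)

# The ranges quoted above for film and modern sensors.
for stops in (8, 10, 12):
    print(f"{stops} stops is about {stops_to_contrast_ratio(stops):,.0f}:1")
```

So the gap between an 8-stop and a 12-stop medium is a factor of 16 in contrast, not a factor of 1.5.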

In a nutshell, a reflective colour or photographic display medium can only display a limited range of light and colour while maintaining smooth photo-realism (when the medium is incapable of displaying the range, you'll see banding in things like blue skies or, worse, blown-out highlights). One of the tricks to 'increase' this range is a technique called tone compression, which is the main focus of this article.
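To give a rough feel for what tone compression means, here is a minimal Python sketch of one very simple global curve, L / (1 + L), applied to a handful of scene brightness values. This is only an illustration under my own assumptions: the function name is made up, and it is not the algorithm used by any particular program mentioned in this article.

```python
def compress_tone(luminance):
    """Squeeze scene luminance (0 and up, linear) into the display range 0..1.

    Uses the simple global curve L / (1 + L): dark values pass through
    almost unchanged, while very bright values are pushed towards 1.0
    instead of clipping.
    """
    return [value / (1.0 + value) for value in luminance]

# Five scene values spanning a 10,000:1 range (hypothetical numbers).
scene = [0.01, 0.1, 1.0, 10.0, 100.0]
display = compress_tone(scene)

for before, after in zip(scene, display):
    print(f"scene {before:>6} -> display {after:.3f}")
```

The order of the tones is preserved and nothing blows out, but the brightest values end up packed close together, which is why tone-compressed images can look flat unless local contrast is handled as well.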


| Figure B: Typical exposure - balanced for the entire scene but doesn't represent what my eyes really saw. |


| Figure C: Single RAW file compensated by ±2 stops in exposure. |


| Figure D: Three bracketed exposures. Note the differences in the rocks on the left compared to Figure C, and the sunset colours and cloud details on the right. |


| Figure E: HDR Efex Pro from Nik Software combines all exposures together to create an image that comes close to what the human eye sees. |

An alternative to traditional tone-mapping is exposure fusion, found in programs like Photomatix. Although Photomatix is traditionally an HDR program, exposure fusion is not actually HDR; it is LDR (low dynamic range), sometimes referred to as DRI (Dynamic Range Increase). Here's a great link on the subject. Basically, it is like HDR in that it combines images, but it uses a much better algorithm that compares the multiple images, takes the best balance of saturation, contrast and exposure, and recombines the images. Unlike HDR images, which tend to have a certain fuzziness or glow about them (hence the velvet painting feel), exposure fusion keeps things sharp looking and much more balanced. Figure F demonstrates how fusion works.


| Figure F: Exposure Fusion via Photomatix Pro. Note the details in the sunset and the rock details. |
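For the curious, the fusion idea can be sketched in a few lines of Python. This is a heavily simplified illustration, not Photomatix's actual algorithm: it works on greyscale values only and weights each pixel purely by how well exposed it is (close to mid-grey), whereas real tools also weigh contrast and saturation and blend more carefully to avoid seams. The function names and the `sigma=0.2` setting are my own assumptions.

```python
import math

def well_exposedness(value, sigma=0.2):
    """Weight near 1.0 for mid-tones (0.5), falling off towards black and white."""
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))

def fuse_pixel(values):
    """Blend one pixel from several exposures, favouring the well-exposed ones."""
    weights = [well_exposedness(v) for v in values]
    total = sum(weights)
    return sum(w * v for w, v in zip(weights, values)) / total

def fuse(exposures):
    """exposures: equally sized greyscale images, each a list of rows of floats in 0..1."""
    height, width = len(exposures[0]), len(exposures[0][0])
    return [
        [fuse_pixel([image[y][x] for image in exposures]) for x in range(width)]
        for y in range(height)
    ]

# Two pixels (a shadow and a highlight) from three bracketed frames.
under = [[0.02, 0.45]]
normal = [[0.10, 0.80]]
over = [[0.40, 1.00]]

fused = fuse([under, normal, over])
print(fused)
```

The shadow pixel ends up taken mostly from the overexposed frame and the highlight pixel mostly from the underexposed one, which is the whole point: each part of the scene comes from whichever exposure rendered it best.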

Beyond HDR or Fusion

One thing I hope to clear up is that you don't need specialized HDR software to give your images this appearance.

One of the more typical ways of compressing or balancing your scene is to use gradient filters while shooting. Grad filters work by cutting the amount of light that reaches a specific area of your scene. Think of the tinted top portion of your vehicle windshield (or even sunglasses). By darkening the bright areas, you can adjust your exposure settings so your shadow areas get more time to expose. The same effect can also be done easily in Photoshop or Lightroom with the gradient filter option (Figure G).


| Figure G: A soft gradient effect |
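If you want to see what a soft grad filter does in numbers, here is a small Python sketch that darkens the top of an image by a chosen number of stops and fades the effect out towards the middle of the frame. It assumes linear brightness values and a simple straight-line fade; the function and parameter names are hypothetical, and this is not how Photoshop or Lightroom implement their gradient tools.

```python
def graduated_filter(image, stops=2.0, start=0.0, end=0.5):
    """Darken the top of an image, like a soft-edge graduated filter.

    image: rows of linear brightness values (row 0 is the top of the frame).
    stops: darkening at full strength (each stop halves the light).
    start, end: fractions of the frame height. The filter is at full
        strength above `start` and fades to nothing at `end`.
    """
    height = len(image)
    result = []
    for y, row in enumerate(image):
        position = y / (height - 1) if height > 1 else 0.0
        if position <= start:
            strength = 1.0
        elif position >= end:
            strength = 0.0
        else:
            strength = (end - position) / (end - start)
        gain = 2.0 ** (-stops * strength)  # 2 stops at full strength = 1/4 of the light
        result.append([value * gain for value in row])
    return result

# A five-row frame of uniform brightness, so only the filter's effect shows.
frame = [[1.0], [1.0], [1.0], [1.0], [1.0]]
for row in graduated_filter(frame, stops=2.0):
    print(row)
```

The sky rows lose up to two stops while the foreground rows are untouched, so the whole frame can then be exposed longer for the shadows.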


| Figure H: Black Card Exposure |

Sometimes, in the end, nothing is better than doing a layer blend of multiple images within Photoshop. Figure I demonstrates a combination of bracketed exposures, layer blending, and some other minor adjustments done in Lightroom. Of course, it does mean you need to set up for three exposures and have some knowledge of Photoshop.


| Figure I: Exposure blending, layer masking of 3 bracketed images. |
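The logic behind a layer mask is simple enough to sketch in Python: where the mask is white you see the top layer, where it is black you see the layer below, and grey gives a mix. The example below builds a soft mask from the bright areas of one exposure and uses it to pull in a darker exposure there. It is an illustration under my own assumptions (greyscale values, made-up function names and threshold settings), not a description of my actual Photoshop steps.

```python
def highlight_mask(image, threshold=0.7, softness=0.2):
    """Soft mask: 0.0 below `threshold`, ramping up to 1.0 at threshold + softness."""
    def ramp(value):
        return min(1.0, max(0.0, (value - threshold) / softness))
    return [[ramp(value) for value in row] for row in image]

def blend_with_mask(top, bottom, mask):
    """Layer mask blend: mask 1.0 shows the top layer, 0.0 shows the bottom layer."""
    return [
        [m * t + (1.0 - m) * b for t, b, m in zip(top_row, bottom_row, mask_row)]
        for top_row, bottom_row, mask_row in zip(top, bottom, mask)
    ]

# One row of three pixels: shadow, bright cloud, blown-out sky.
bright_exposure = [[0.3, 0.8, 1.0]]
dark_exposure = [[0.05, 0.4, 0.7]]

mask = highlight_mask(bright_exposure)
result = blend_with_mask(dark_exposure, bright_exposure, mask)
print(result)
```

The shadow pixel stays as it was in the brighter frame, while the blown-out sky is replaced by the darker frame, with a smooth transition in between.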

I personally have enjoyed using the black card technique for most of my work. I'm certainly comfortable with all sorts of software, but in the end, the most important thing to me is achieving the closest possible image to what my eyes adjusted to and saw. The RAW images from your camera are certainly more than capable of capturing that kind of vision without resorting to HDR or multiple exposures (Figure J), but of course that means exposing your image correctly so you can do the necessary recovery of shadow and highlight details at a later date.


| Figure J: Sometimes you can just use your original image and just do all the corrections in Lightroom. |


Great blog. I just can't understand how you are not followed by lots of photographers! Outstanding! Congratulations.

Thanks Archerphoto. I appreciate the compliment. I'm not sure who reads my blog, but I do get pretty good traffic for it. Since I started this in January, I've had over 13,000 visitors, with 70% of them being new, and the other 30% repeat.

I try to keep it informative and interesting when I can.

Cheers

Terry

I for one follow your posts and find them extremely informative. I also follow you in flickr. Thanks a million for sharing.

Very informative Terry. Thank you for an excellent read.

I haven't tried HDR Efex Pro, but I have used Photomatix. It's really hard to get the tone mapping (the HDR part of Photomatix) right and get a natural looking HDR. I have also found the layer blending (Auto Align, Auto Blend) option in Photoshop to work better in certain shots. Sometimes I use Photomatix to get an HDR, use it as the top layer, add the original bracketed images below it, and set the opacity differently to get a more natural look. After reading this post, I now need to experiment with the LDR side.